AI Recruitment Tools: GDPR, EU AI Act and Corporate Liability | PSL Avocat

AI hiring tools, candidate scoring, human-in-the-loop: what GDPR and the EU AI Act require from companies and recruitment firms operating in Europe.

PSL Avocat

4/12/2026 · 4 min read

AI in recruitment: if a candidate, a union or a regulator asked you the question today, could you defend your tools?

Philippe Sigal — April 9, 2026

In most conversations I have with HR directors and recruitment firms, the honest answer is no.

Not for lack of diligence. Because of speed.

The tools were deployed. The legal analysis did not follow.

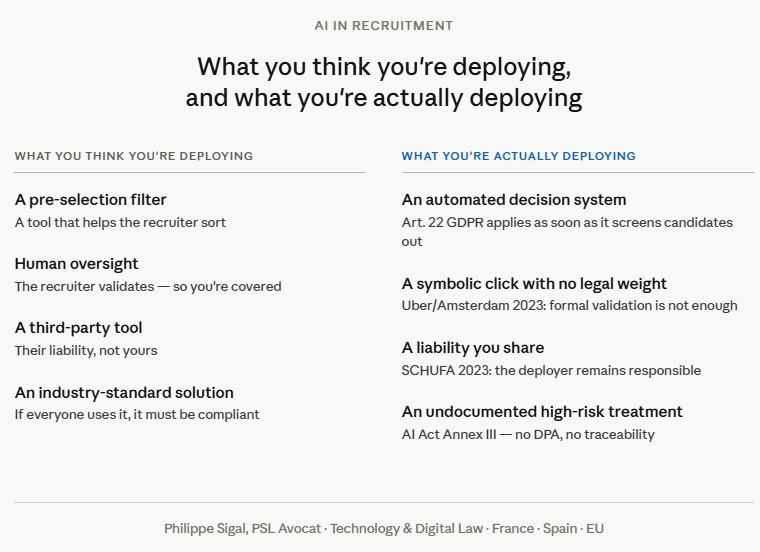

What you are actually deploying

When you activate an AI recruitment tool, you are not deploying a filter.

You are deploying a decision system that sorts, ranks and screens out candidates. It runs on algorithms you do not control, trained on data you have not audited, and is sometimes hosted outside the EU.

Under EU law, this qualifies as automated decision-making in an employment context, one of the most sensitive areas of the law.

And if you are an external recruitment firm, you are not a mere intermediary: you enter the liability chain. The question of who is responsible for what is rarely settled in contracts. It needs to be.

What the law already says: three key decisions

1. CJEU, 7 December 2023, SCHUFA Holding (C-634/21)

A landmark ruling by the Court of Justice of the EU on Article 22 GDPR. The question: does a score produced automatically by an algorithm, on which a third party relies heavily when making its decision, constitute an "automated decision"?

Answer: yes, even if the third party makes the final decision.

➡️ If your tool determines which candidates reach the recruiter, you fall within the scope of Article 22.

2. Amsterdam Court of Appeal, 4 April 2023, Drivers v. Uber and Ola (the "Robo-Firing case")

Uber argued that it had "humans in the loop" to validate algorithmic decisions. The Court rejected this argument, finding the validation to be "nothing more than a purely symbolic act", and held the decision unlawful under Article 22 GDPR. In October 2023, having failed to comply with the ruling, Uber was ordered to pay €584,000 in penalties.

➡️ The human click no longer protects you. Oversight must be real, documented, and capable of changing the outcome.

3. Spanish Audiencia Nacional, 4 July 2025, CGT v. Foundever Spain (case 182/2025)

The company denied using algorithms in HR management, although two earlier decisions of the same court had already found otherwise. The Court declared the practice null and void, awarded compensation for breach of trade union rights, and ordered the information to be disclosed.

➡️ Denying the existence of an HR algorithm when one exists is a legal fault in its own right. The risk is legal, industrial-relations and reputational.

What the regulators say

France's CNIL and Spain's AEPD have both published clear positions on the use of AI in HR. Their conclusions converge: genuine, non-symbolic human oversight is required for any significant decision. This is precisely the formulation adopted by the Amsterdam Court of Appeal.

What I see in practice

In conversations with HR directors and legal teams, the same blind spots recur: tools deployed without consolidated mapping or legal characterisation, human-in-the-loop review that is purely formal, supplier contracts with no DPA or AI Act clause, and no decision traceability.

As things stand, most of these setups would not withstand a candidate's access request, a formal notice from a union, or an audit by a data protection authority.

GDPR + AI Act: you are already exposed

Three obligations most organisations are not managing.

Legal basis: consent is almost unusable in recruitment because of the structural power imbalance between employer and candidate. Legitimate interest is possible, but only if supported by a documented balancing test, which most organisations have not conducted.

Article 22 GDPR: since the SCHUFA ruling, a tool that scores or ranks candidates in a determinative way falls within this scope, even if a human validates the outcome.

Candidate information: do your candidates know that an algorithm is evaluating them, that they can request human intervention, that their data is processed by a third-party provider outside the EU? Probably not.

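On the AI Act side (Regulation (EU) 2024/1689, in force since 1 August 2024): AI systems used for recruitment and candidate selection are classified as high-risk (Annex III, point 4(a)). Most obligations for these systems apply from 2 August 2026.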

The Digital Omnibus trap

In November 2025, the European Commission published a legislative package, the Digital Omnibus, that will likely defer certain AI Act obligations and harmonise some DPIA requirements.

Many read this as a green light to wait.

That is a misreading. Recruitment will remain classified as high-risk. GDPR applies in full today. And the risk does not disappear: it shifts from the regulator to litigation. And it grows, precisely because the framework is becoming clearer.

In 18 months

The question will no longer be "are you using AI in recruitment?"

It will be: "can you prove you are using it lawfully?"

Candidates screened out by algorithms are beginning to challenge decisions. Large organisations are imposing AI compliance clauses in their contracts with HR providers. In Spain, workers' representatives have a statutory right to information on the parameters of the algorithms that affect employees, and unions are using it.

AI compliance in recruitment is not an administrative constraint. It is a condition of market access, and soon a clause your clients will require you to satisfy before signing.

How to act

For organisations affected, I advise on three types of matter.

Legal mapping of AI tools deployed in the recruitment chain: legal characterisation under GDPR and the AI Act, identification of priority risks, clarification of the liability chain.

Review and update of supplier contracts: DPA, AI Act compliance clauses, contractual allocation of responsibilities.

Candidate documentation: privacy notices, information notices, management of access and challenge rights.

This is not compliance for compliance's sake. It is what allows you to answer "yes" when the question is asked.

If this concerns you, let's talk.

Contact

Registration

contact@pslavocat.com

+34-672-939-146

© 2025. All rights reserved.

Attorney at Law (Avocat à la Cour), Paris Bar

+33-7-71-06-80-60

Philippe Sigal